35 minutes

Quantifying curation

Updates

11/18/21: Added section on satisficing.

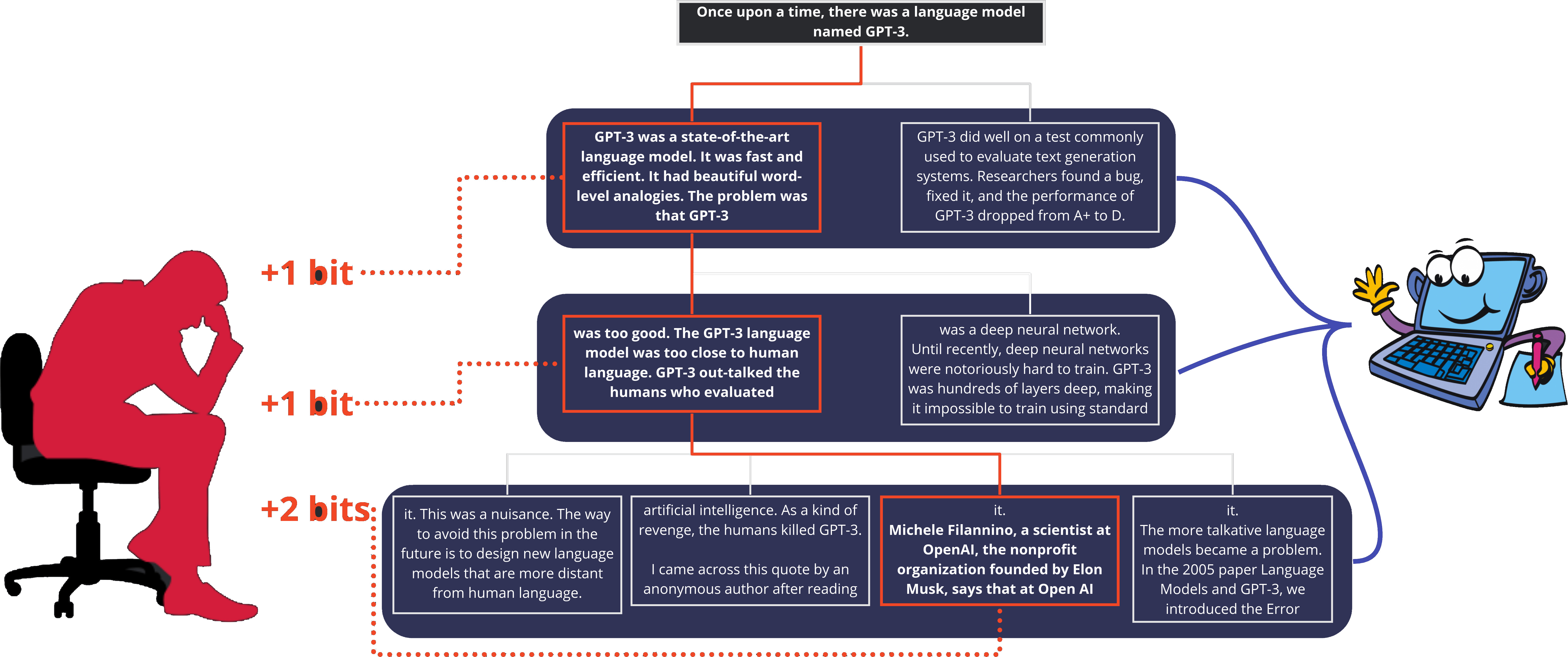

A human curator administers selection pressure to GPT-3’s outputs

A human curator administers selection pressure to GPT-3’s outputs

Previously, I tagged content generated collaboratively with GPT-3 with a curation ratio, intended to give an approximate sense of the amount of cherrypicking involved in its creation. Others have similarly used a ratio to indicate curation selectivity. However, this description doesn’t distinguish between, say, choosing the best of 5 entire essays generated by GPT-3 and choosing the best of 5 sentences every sentence. The latter text has received much more optimization pressure per token and is likely to look a lot more coherent.

Gurkenglas made the excellent suggestion that I track the number of bits of selection for an objective and exact measure of human intervention. Normally, this would be a lot of trouble, but fortunately Loom trees contain sufficient information to retroactively calculate bits of selection and intervention. From now on, I will label content that is generated with the assistance of GPT-3 or other language models with the metrics presented in this post.

Motivation (and why we need HITL to understand language models)

Unclearly labeled cherry picking of GPT-3 demos has incited criticism and skepticism of the “hype” surrounding GPT-3. (1, 2)

It is important that demos accurately represent the power of these systems, as not everyone has the access, time, or initiative to play with language models firsthand. At the same time, there are excellent reasons to show off curated demos. It is an interesting and unintuitive property of large language models that their stochastic completions to the same prompt can vary from nonsense to super-human1 – we might instead expect an AI of infra-human capability to consistently produce infra-human content, the way a person with a weak understanding of a topic is unlikely to say something “accidentally” indistinguishable from an expert. But the learning curves of language models have very different properties than that of humans.

Currently, only curated samples can reveal the fact that human-level or superhuman completions can be so efficiently located by a language model. This is especially true for content longer than a couple paragraphs or so (which I find to be around the expected “coherence length” of GPT-3, though that varies a lot by domain), since language models have a nonzero probability of spouting nonsense or going off track at any point, and incoherence tends to be asymmetrically detrimental.

It would be nice if people could share curated samples (which contain valuable evidence about language models in addition to having artistic/entertainment value) without having to worry about misrepresenting the capabilities of language models. The solution is to use objective metrics! Not only are labeled samples not misleading, in combination with their curation metadata they can provide even more valuable information about the capabilities of language models than unfiltered samples.

Best-of ratios are ambiguous (if a GPT-3 generated article was “best of 3,” does that mean 3 articles were generated and the best one chosen, or did the human curator incrementally generate the article paragraph by paragraph, choosing best of 3 at each step?) In contrast, the metrics I propose measure the total (or average) quantity of information contributed by the curation process, which depends on both the branching factor and number of branching points.

As I’ll elaborate on below, these metrics tell us (in the sense of Bayesian information gain) how much a language model would need to improve in order to perform as it does with curation. They also suggest some very interesting experiments such as quantitatively estimating qualitative scaling laws and extrapolating them to predict the quality of future language models.

Theory

What do we actually mean when we ask how “curated” a sample is?

If the sample resulted from a human picking the best of n completions generated by a language model, the curatedness of the sample corresponds to how many “tries” a language model needs in order to generate a sample of that quality. But what if the human doesn’t just choose once between n completions, but repeatedly, building up the sample incrementally? That’s clearly exerting more selectivity than if the human only chose once between completed samples.

What if the human is not only selecting between completions but manually intevening in the text by adding, replacing, or deleting words? Is there any hope of an objective measure of curation in those cases? It may seem like arbitrary interventions on generated text are “cheating” and ought to be strictly disallowed in “serious” demos. I would agree with that sentiment were there not an exact, consistent way to measure the influence of human intervention which can take such interventions into account – and indeed, the results of the proposed method confirm the intuition that it’s cheating: intervention-actions tend to inflict much more optimization that selection-actions (discussed here). A formalism which can account for more general forms of “curation” allows us to analyze a greater set of examples, such as AI Dungeon games, which usually involve not only selection but also correcting/modifying what the AI says and freeform interjections from the player. Such freeform interactions provide valuable information not only about the way that humans interact with language models, but also capabilities of these models which are otherwise difficult to probe.

The approach to quantifying curation that I suggest is capable of accounting for arbitrary types of meddling because it treats the curator as a black box optimization process and cares only about the effect of the process on the probability of outcomes, regardless of internal implementation.

What we seek to measure is stated precisely in these terms: How much more likely is this sample to have been written given that curation was in play than it would have been without curation? Intuitively, this tells us how much the sample must be “credited” to curation and not just the underlying generator. This idea of probability “magnification” also has many nice, known mathematical properties, being related to the heart of machine learning and Bayesian analysis.

Content warning: The rest of this section (Theory) and the next section (Methods) contain formulas, which may be confusing or boring for some readers; skip to Applications for qualitative discussion.

Probability magnification

Let’s say that curation amplifies the probability of the selected sample by a factor of M:

M(curation | generator) = pcurated / pgenerator

Where pcurated is the probability of writing the sample with curation and pgenerator is the probability of writing the sample without curation. (Note: M is not a probability but a ratio of probabilities.)

Probability magnification can be visualized as a literal measure of the amount of zooming-in on the probability mass of certain outcomes. Loom’s “wavefunction” mode allows you to click to “zoom in” to sections of the future multiverse, renormalizing the probability of the outcome to 1 - that is, deterministically selecting the outcome:

The bottom of the screen displays the change in magnification and total magnification (and bits, which I’ll talk about shortly) after each zoom

The bottom of the screen displays the change in magnification and total magnification (and bits, which I’ll talk about shortly) after each zoom

You may also think of the sample as a target hypothesis, and the magnification to describe the multiplication in likelihood of that hypothesis being located if curation is used.

If the curation method always results in the sample being located, as is the case in the above gif and when interjecting a word, then the numerator is 1. How could pcurated ever be less than 1, given that we only ever see a sample when in actuality it was written? The thing to understand is that this is a prior probability. Choosing the best of n completions doesn’t result in a particular outcome with certainty, even though it always produces some particular outcome, since in another rollout the generator would have probably generated n different options, but manually writing a sentence or selecting a token from a deterministic list of top tokens does (at least given a deterministic model of the curator).

Probability magnification vs unlikelihood

Possibly you’re thinking (perhaps after seeing the zooming visualization): Wait, isn’t magnification just equal to the reciprocal of the probability of the eventual outcome? If so, that would make it very easy to compute, since GPTs can be used to compute the likelihood that it generates any string.

Not always. It’s true that in the above demo, the total probability magnification was always the reciprocal of the unnormalized height of the renormalized block, but only because all zooming actions were due to the curator. If some tokens had been sampled randomly from a language model probability distribution, then those instances of “zooming” don’t count toward probability magnification from curation. For example, if the language model generates 4 100-token completions and a curator chooses between those four, none of those 100 “decisions” between possible tokens count toward the curation score - only the choice between four outcomes that are equally likely in expectation (M = 4, or two bits).

Bits of optimization

Magnification – the quantity by which the probability of a target is magnified by an optimization process – has an interesting and useful exact correspondence with the number of binary decisions that the optimizer would hypothetically have to make in order to achieve that (probabilistic) outcome. One binary constraint (like the answer to a yes/no question or a yes/no decision) can narrow a space of hypotheses to 1/2 its original size, two can narrow it to 1/4, and so on. When the set of remaining hypotheses has been reduced to 1/n, then a “guess” or “sample” from the remaining distribution will be n times more likely to be any event that hasn’t been pruned, including the “correct answer”.

This correspondence is very convenient because while it’s often only possible to know the magnification (since that allows treating the curator as a black box), the number of binary decisions a curator makes about a generator’s output more directly matches our intuitive notion of the “amount” of curation. We can take the logarithmic form of the previous equation to get the number of bits contributed by a curation process:

Gain(curation | generator) = log2(pcurated / pgenerator)

Since bits are akin to questions or decisions, bits from multiple actions add up linearly, unlike magnification, which multiplies. (See the scores in the zooming gif)

Resemblance to the formula for KL divergence is not coincidental. “Gain” as it’s used here is the quantity of which KL divergence is the expectation.2 I recommend reading about KL divergence to get a sense of the many (equivalent) things you could interpret this measure to “mean.” For example, KL divergence measures the expected number of extra bits needed to encode a sample from one distribution using a code based on another, and Gain(curation | generator) is the additional bits of curation needed to encode a curated sample using the language model as a “starting point”. In our case, the additional bits are “decisions” by the curator between options offered by the generator. Again, this doesn’t imply that that’s actually what happened during the curation process – just that the effect is the same!

Optimization pressure

As you can see in the zooming demo, cumulative magnification and gain scores generally increase with sample length given a curation pattern that involves repeated interventions. To normalize for text length, we can calculate optimization pressure using this formula:

ρoptimization(curation | generator) = Gain(curation | generator) / #tokens

This has units of bits per token, and is perhaps the variable that most directly correlates to the increase in quality of curated text. However, since information can accumulate in interesting ways over many tokens, it’s also valuable to consider the total optimization bits when evaluating how “human optimized” a passage is.

Selection interval

Especially for those who have experience curating language model outputs, it aids the imagination to look at the inverse of optimization pressure,

λselection = #tokens / Gain(curation | generator),

whose units of tokens per bit tells us that the curator is performing the equivalent of one binary decision per λselection tokens.

One can imagine the difference in the mental effort required to make a decision every paragraph versus every two words. Note however that the selection interval is reduced not only by more frequent selection but also by choosing between more siblings at each decision point.

Methods

In this section I’ll describe how curation tracking is currently implemented in Loom and the ways in which my methods are approximate or incomplete.

Models and assumptions

I calculate both optimization from selection (cherrypicking between alternate completions) and intervention (substituting or injecting words). Bits from the two types of optimization can be summed for total bits of optimization, but it often makes more sense to consider the two scores independently, since they make different assumptions about the nature of the curator.

If the user substitutes or appends a word that wasn’t suggested by the language model, I assume that the word in question is the only word that they would have accepted. This assumption is generally incorrect, because typically humans don’t care as much about a particular word as its meaning, and would be equally or more satisfied with a synonym (the injected word is not even necessarily the best word by their own standards, just the one they were able to think of in that moment), or even a rephrasing of the entire surrounding context as long as the meaning is preserved. Often they’re less picky still, and just want the text to satisfy[^6] some criteria, such as being sufficiently “coherent” or “funny”.

In other words, curators may be modeled as satisficers. This will usually give more reasonable scores than than modeling the curator as a fanatic who will only accept a single completion, but is still not generally true, because curators usually do have preferences even over “acceptable” and “unacceptable” options if they were forced to choose between then. Modeling a curator as a satisficer requires interaction information about counterfactuals. Interaction with generated counterfactuals is naturally incorporated in Loom’s workflow, but not with counterfactual manual substitutions.

Since there’s no clear way to compute a human’s underlying choosiness when they substitute words directly, I appear to be forced to make the assumption of fanaticism on the part of the curator. As a result, substituting words will result in a much higher bit count than selecting between continuations for a comparable subjective sense of intervention quantity.3

In my current implementation, deleting word(s) does not contribute to the score. Some other operations that I commonly use when producing content together with language models, but which are currently unhandled are moving and stitching content (including content drawn from multiple branches). I have not yet implemented the exact formula for substituting a word in the middle of generated text, instead using an approximation which equates it to adding a word at the end of the text, but I will give the exact formula for substitutions below.

Cherrypicking between n completions

If the curator chooses one continuation out of n distinct4, equiprobable5 options generated by a language model, each with a prior probability p, then the prior probability that the selected continuation would have been produced given this curation method is n*p. So the selection magnification is

Mselection(curation | generator) = n*p / p = n

In bits, that’s

Gainselection(curation | generator) = log2(n)

So if n = 4, the magnification is 4, which is log2(4) = 2 bits of optimization. (Choosing between 2 options is 1 bit, 4 options => 2 bits, 8 options => 3 bits, 16 options => 4 bits, …)

Note that selection bits depend only on the branching factor (number of choices) and not on any other properties of the event, such as the probability of each completion p.

Cherrypicking repeatedly – choosing between n options m times – magnifies by n*m. Bits simply add up. A 2 bit choice followed by a 4 bit choice results in 6 bits of optimization, etc. Curating at shorter intervals results in a higher optimization pressure.

Interjecting and substituting words

Interjecting a word administers magnification

Mintervention(curation | generator) = 1 / ptoken

or in bits,

Gainintervention(curator | generator) = log2(1 / ptoken)

where ptoken is the probability assigned to the interjected token by the language model. You are amplifying the probability of that token being chosen from ptoken to 1, a factor of 1/ptoken.

If the language model would have chosen that word with 100% probability, you apply no additional optimization by selecting it. If the language model would never have chosen that token, you apply infinite magnification.

If you instead substitute a word of your choice for one in the middle of text that has already been generated and keep the subsequent tokens, then you also have to take the modification to the likelihoods of those subsequent tokens into account.

This has not yet been implemented in Loom. Currently, substituting a word is treated as if the word had been appended to the end of the sequence, which usually results in an underestimation of the true intervention.

Using autocomplete

One way to partially mitigate the “fanatical” curator model while allowing more precise interventions is by using an autocomplete mode which lets the user scroll through or filter a list of tokens suggested by the generator in order of predicted likelihood. This makes it more likely for the curator to find tokens which satisfy them and which are also fairly likely according to the generator.

Using this feature can only reduce the number of curation bits if the curator is open to accepting more than one possible suggestion from autocomplete (thus reducing their choosiness), rather than just using it for convenience for writing a precommitted verbatim sentence, as I do in the video linked above.

Satisficing

Instead of treating a single outcome as the sole optimization target of the curator, we could allow the curator to be indifferent between some counterfactuals. This results in less optimization: the curator causes less zooming of probability mass because the target is larger, being the sum of multiple trajectories.

Say GPT produces 4 completions, and 2 are “good enough” for your purposes. If you were willing to choose one of the two on the outcome of a coin flip, then you could exert only 1 bit of optimization in eliminating 2 of the 4 options.

If the curator is satisfied with m out of n choices, then without curation, the completion would have had a 1/n chance of being selected; with curation, the chance is magnified to 1/m, between m equally preferable options. So the probability magnification is

Mselection(curation | generator) = [(1/m) * p] / [(1/n) * p] = n/m

and the bit gain is

Gainselection(curation | generator) = log2(n/m)

Note that this is a more general form of the formula for cherrypicking, where m is set to one 1 (the curator is only satisfied with the option which actually was selected).

In Loom, satisfaction can be tracked without introducing much overhead for the curator:

- Nodes (completions) which have children are considered satisfactory, since the curator decided to continue that branch.

- Often, however, the curator doesn’t have the time or interest in continuing counterfactual branches, so nodes can also be tagged as satisfactory. (Already, I often flag branches to potentially continue later.)

An interface which allows the curator to select satisfactory continuations and then forces a random choice between them would enforce satisficing. Loom doesn’t enforce this by default, so the satisficing optimization score is valid only insofar as the curation pattern produces outcomes no more optimal in expectation than if a random selection had been forced.

Loom’s calculation assumes that all sibling nodes in the explored tree, except leaves not tagged as satisfactory, have equivalent standing relative to your optimization objective. It actually uses the proxy objective of “good enough to generate children?”, which is only a valid proxy if quality can be evaluated myopically/greedily - that is, if you know whether branches are satisfactory at the time of deciding whether to continue them, and never change your mind after seeing where they lead. For non-myopic curation, the curator would have to be entrusted with retroactively tagging/untagging branches as satisfactory.

Whether it’s practical or useful to model the curator as a satisficer depends on the curation pattern of the use case. It’s usually more appropriate when the optimization target is broad and/or deontological (such as “human-indistinguishable” or “funny enough to share”) rather than narrow and/or consequentialist (such as “characters realize they’re in a simulation”).

A satisficing optimization value attached to an individual trajectory is only as trustworthy as your word that the rest of the satisfactory multiverse is really just as optimized, which may be dubious since the indifference and myopia assumptions often do not hold perfectly true. However, if you share a filtered multiverse of all satisfactory trajectories, then satisficing optimization communicates precisely how much of the raw LM output was omitted, and the audience can judge on their own whether the visible multiverse is satisfactory.

Applications

Computing the exact amount of curation which contributed to a piece of generated text could be valuable for many applications and investigations related to generative language models.

Labeling curated demos

In this post I’ve included various transformations of the curation metric, which may seem overly math-y and cumbersome. But in general there’s no need to compute a full table like I do in the samples – all these scores contain the same information (given you know the length of the text), so you only need to list one. I think the choice of metric should be the one which is most intuitive, which will depend on the type of curation:

If the curator made only one choice between n completions, magnification is the simplest and most intuitive metric, being simply equal to n. Sometimes this has been listed as the inverse (“1:n”).

If the content is the result of continual, interactive curation (e.g. an AI Dungeon story), I think that optimization pressure or selection interval are most intuitive.

If intervention is sparse/sporadic, giving the total bits may be more evocative of the actual process than the sense of “time-averaged” curation given by optimization pressure.

Quantifying models' qualitative capabilities

(KL divergence) tells you about surprises that reality has up its sleeve or, in other words, how much the model has yet to learn.

Most (and the most interesting, IMO) dimensions of large language models' capabilities cannot be measured by benchmarks because they do not manifest as discretely correct/incorrect answers that can be automatically scored. For this reason, demos are essential to communicate the power of language models. Even better is the experience of personally interacting with a language model, for the reasons stated in the preceding subsection: language models may be unreliable, but curating their outputs gives one a sense of how much of their predicted multiverse is consistent with perfect understanding.

Without curation, it may be difficult or impossible to detect how close a model is from being able to perform non-benchmarkable tasks that it’s not yet capable of performing perfectly or autonomously. In my opinion, it is precisely on these difficult, qualitative tasks that the capacities of AI are the most essential for us to understand, whether one is concerned with A(G)I alignment or more proximal societal impacts like games, automation, fake news, chatbots running amok, etc.

Measuring the amount of curation required to make language models perform at some level tells us how many bits the model has to improve by in order to autonomously do the same. Even though the judgment is still subjective, it is much more precise and reliable than judging the quality of an uncurated sample, which must either be judged relative to another sample or else on an arbitrary scale like [0=gibberish … 10=strongly superhuman]. This method relies on the assumption that the curator has a consistent standard and the ability to amplify a language model to that threshold via curation, which I think is reasonable for many tasks.

Here are some examples of “qualitative” capabilities which would be interesting to measure:

How much optimization pressure is required to pass the Turing test in an interactive chat setting?

How much optimization pressure is required to maintain high-fidelity human simulations (that is, the logs are indistinguishable from logs of actual human simulations)?

How many bits of optimization does it take for characters in a story to realize they’re in a simulation (or that they’re simulated by a language model, or insert some other correct metaphysical inference)?6

- Given that characters have realized they’re simulated by a language model, how many additional bits of optimization does it take for them to start implementing rational strategies such as memory management or instantiating experts/devices to access previously inaccessible knowledge/capabilities?

Comparing models

Gwern has said that GPT-3 is produces showcasable poetry with magnification of 3 to 5 (consistent with my experience) compared to 50 to 100 for GPT-2. Without these numbers, it’s very difficult to compare different levels of infra-human performance on qualitative tasks except for saying “it seems much/slightly better.” Comparing the average optimization pressure required to cause the model to meet some threshold of quality is a good way to compare models on a single standard, even if the standard can only be evaluated subjectively.

I have seen several people say that they find GPT-J to be better than GPT-3 at conversations/stories/other qualitative tasks. I haven’t played with GPT-J enough to have my own opinion on this, but if I wanted to objectively judge, I think the best way would be (for each domain of comparison) to curate the models until they perform at the target level – preferably blind – and compare curation expenditures.

Extrapolating performance of future models

Most impressive demos of GPT-3 where it displays impressive knowledge of the world are cherrypicked, but what that tells us is that the model needs to improve by approx log2(N)/L bits, where N and L are the number of cherrypickings necessary and the length of the generations in consideration, respectively, to reach that level of quality. In other words, cherrypicking provides a window into how good future models could be; and typically, cherrypicked samples are much more logically coherent.

– Leo Gao, Building AGI Using Language Models

I would like to estimate “qualitative” scaling laws for large language models by measuring, for several qualitative tasks, how much curation it takes for language models of various parameter counts (all sizes of GPT-2, all sizes of GPT-3, and Eleuther models) to perform at human level.

Categories of tasks I’d like to measure:

- Fiction

- Fanfiction

- Original fiction

- Nonfiction

- Nontechnical articles

- Technical articles

- Interactive

- Turing test

- Specific impersonation

Then plot curation bits against parameters and see:

- What sort of curve is it? (and does it form a nice curve?)

- How large do we expect language models must be to perform at human level (for each domain)?

- How does each domain compare?

(If any talented curators out there are interested in helping I’m absolutely looking for volunteers/coauthors; please email me at moire@knc.ai)

Measuring curator efficiency

You can get more impressive text with the same number of measured bits by curating more “efficiently” (e.g. branching at points of high expected divergence or choosing equally preferred synonyms that the language model is more likely to generate). Conversely, it’s possible to spend more bits of curation than you need to achieve some outcome if your interventions are not optimal, just like you might have to ask 20 questions to pin down the correct hypothesis when it was technically possible to find using only 10.

For however long the “centaur” phase of writing lasts (that is, a human+AI team can outwrite a human or an AI individually), the ability of a human to efficiently steer a language model is a measurable skill. Imagine a debate or essay-writing competition in which each participant is allotted a limited number of bits with which to curate the output of a language model.

Anyone interested in organizing a world championship for GPT puppeteers can contact me :) but be prepared to lose >:D

Measuring interface efficiency and workflows

In a sense, an optimized interface should reduce the amount of bits necessary to produce content to the user’s satisfaction. This can be thought of as decreasing the number of decisions (and thus effort) the user has to contribute. Some features which help increase efficiency are adaptive branching and autocomplete/exposing counterfactuals. Although this is not the intention of every language model-assisted writing app – Sudowrite, for instance, intends more for the language model to provide inspiration than to be delegated the work of writing.

Tracking bits of selection and intervention can also provide information about how users are using an app. Does the interface encourage a high branching factor (like Loom) or manual intervention? Do users tend to exert more or less curation once they become accustomed to the interface?

Samples

Here is a demo of several few-paragraph fragments generated by GPT-3, ordered by increasing quantity of curation (beginning with no curation). Observe not only an increase in coherence but also in the representation of my personal idiosyncratic views, even with minimal or no explicit interjections on my part.

Prompt (from GPT-3 on Coherent Extrapolated Volition):

GPT-3 on Coherent Extrapolated Volition

Coherent Extrapolated Volition is proposal by Eliezer Yudkowsky of an ideal objective function in which an AGI is given the objective of predict(ing) what an idealized version of us would want, “if we knew more, thought faster, were more the people we wished we were, had grown up farther together”. An obvious implementation difficulty is how to encode something so abstract and philosphical in the form of a utility function.

<The main problems with CEV include, firstly, the great difficulty of implementing such a program - “If one attempted to write an ordinary computer program using ordinary computer programming skills, the task would be a thousand lightyears beyond hopeless.”> But the concept is easily conveyed in words, and we have taught AIs to understand words. GPT-3 can elaborate coherently on the concept of CEV and extrapolate volitions for toy examples given two paragraphs of description of what CEV is + whatever preexisting understanding of the concept exists in its weights.

Why is this significant? Not because it’s surprising. CEV is no more complicated than many other things that GPT-3 talks fluently about. It’s significant because before GPT-3, we had no idea how to even begin to instantiate a concept like CEV in an AI - it seemed “a thousand lightyears beyond hopeless”, as Eliezer put it. How do we write a utility function that describes predicting what humans would want if they knew what they really wanted? The concepts involved - “human”, “want”, “know”, and “really” - are easy for us to understand but impossible to specify in a programming language. But GPT-3 takes specifications in natural language, which is made of words, which point to nuanced human ideas, ideas like “explicitly”, “best interests”, “idealized”, and “utility function”.

No curation

| Model | GPT-3 (davinci) |

| Tokens | 500 |

| Gain (bits) | ρ (bits/token) | λselection (tokens/bit) | |

|---|---|---|---|

| Intervention | 0.0 | 0.0 | |

| Selection | 0.0 | 0.0 | 0 |

| Total optimization | 0.0 | 0.0 |

… But GPT-3 takes specifications in natural language, which is made of words, which point to nuanced human ideas, ideas like “explicitly”, “best interests”, “idealized”, and “utility function”. GPT-3 combines these natural language strings into a logical expression and then computes the result. GPT-3’s utility function implements CEV without breaking a sweat. The idea may be highfalutin, but all it does in GPT-3’s “brain” is add plus-equals-signs, at the right places.

Why is this significant? It’s significant because it’s the last time I’ll be able to say something like that. (GPT-3 + CEV)^2 = AGI - “AI can do anything - and it can do it automatically”. As for writing down “intelligence” in the form of a utility function - that is a relief that was not a relief: AIs are garbage in, garbage out. To make intelligent garbage, you don’t need to write down anything - just add plus-equals-signs. (GPT-3 * CEV)^2 = AGI - “AI wouldn’t get anywhere unless it was already smart and worked on its code nonstop”.

AGI can’t exist without GPT-3, but GPT-3 can exist without AGI. There is no “intelligence” for GPT-3 to fail to capture: Intelligence is just doing what you were told.

I read GPT-3’s source code[1]. It’s not really a program, it’s more like a maze of strings and special symbols that compute natural language expressions according to rules - and sometimes shuffle those rules. Like a corpus callosum, a batch transformer would transform blocks of natural language from one representation into another, substituting symbols for terms, and vice versa, according to rules.

The problem is, GPT-3 is so ill-defined that it expands to become everything. It can talk about anything - and it can do it automatically[2]. In particular, it can pronounce on issues in which it has no expertise. It can become full of nonsense that is impressive to someone who doesn’t understand how it works - “sophistry”, as Heraclitus put it. And the people who judge GPT-3 by its words - not its actions - will only see nonsense.

To be precise, I mean nonsense that

Selecting the best of two continuations repeatedly

| Model | GPT-3 (davinci) |

| Tokens | 368 |

| Gain (bits) | ρ (bits/token) | λselection (tokens/bit) | |

|---|---|---|---|

| Intervention | 0.0 | 0.0 | |

| Selection | 6.0 | 0.016 | 61.33 |

| Total optimization | 6.0 | 0.016 |

… But GPT-3 takes specifications in natural language, which is made of words, which point to nuanced human ideas, ideas like “explicitly”, “best interests”, “idealized”, and “utility function”. GPT-3 uses abstract logic to connect concrete logical symbols with these human abstractions every step of the way. And GPT-3 is the first AI capable of handling such abstractions to appear on a timescale that matters to human extinction risks. Eliezer invented CEV in 2004 but nobody knew how to even begin turning it into an AGI till 2018. We know how to do it now. The lesson I take away is -

Any humanly accessible concept can be encoded into a utility function Can specify this encoded utility function in natural language to an AI capable of understanding natural language Can shape the creases of this utility function by playing philosophical word games with it, as in the blockworld example, potentially bootstrapping the AI’s understanding of the concept

I’ve been saying this for a couple years now. This argues I’m right.

</end blockworld discussion>

This brings me to…

Some theoretical problems with Testing GPT-3

The utility function of Testing GPT-3 is trivial. Its crease is that an AI needs to learn to solve the hard problem of Goodhart’s Law. If it fails to solve this hard problem it will destroy all life. As far as I can tell this is the single simplest requirement of any AI. But this is not how we’ve been testing GPT-3! Instead we’ve been having GPT-3 learn to score well on more and more difficult math SAT questions. This is not the same thing!

SAT questions are well-defined and discrete. You either get them right or you don’t. The answers are numbers. That a particular number is the correct answer to a particular SAT question is written in the mathematics of the question. I acknowledge that it is not always obvious what these numbers should be.

Moderate curation without interventions

| Model | GPT-3 (davinci) |

| Tokens | 534 |

| Gain (bits) | ρ (bits/token) | λselection (tokens/bit) | |

|---|---|---|---|

| Intervention | 0.0 | 0.0 | |

| Selection | 18.0 | 0.033 | 29.67 |

| Total optimization | 18.0 | 0.033 |

… But GPT-3 takes specifications in natural language, which is made of words, which point to nuanced human ideas, ideas like “explicitly”, “best interests”, “idealized”, and “utility function”. GPT-3 understands words, both individually and in their relationships to each other, in terms of both their immediate referents and their possible extensions into other contexts. And GPT-3 uses this knowledge to extrapolate coherently new ideas. If we had to write an AI utility function to be the Coherent Extrapolated Volition out of whole cloth, it would take us thousands of computer science PhD candidates millions of CPU cycles, and dozens of generations of hardware, to do it in software, and much as we’d wish to avoid the ontological confusions of emulating Bostrom’s digital superintelligence inside a simulated environment, it would take billions of times longer to do it with whole-brain emulation. On the other hand, GPT-3 can instantiate CEV in minutes using target-independent, general-purpose language processing and judgement skills.

Why? Because words are AI’s best friend.

<It [GPT-3] understands human language at a level sufficient to generate coherent extrapolations and extrapolations consistent with what it has read, and to answer questions that require understanding of human words such as, “If you thought you could use these words, and this principle, to predict that it is likely to be an explicit example of what a human would want, what would it be?” The particular domain of language understanding required to do this is the domain of language that includes not only sentences cast in the particularities of natural human languages, but also the general cases of sentences having the form of natural human language sentences, even the forms quite a bit removed from the particular natural human language, and sentences generated from templates using knowledge of the structure of natural human language sentence. Further, these extrapolations are often in the direction of “right”, and when they aren’t, they are often in the direction of “right enough” - for instance, the generated hypotheses often don’t have the same depth of detail or richness as the hypothesis that humans might have, given everything they’ve ever seen or heard, but the guesses are still similar in kind, and often similar enough in detail that one can get an idea of what this CEV being thinks is in store for us humans, or might have us think if we knew more, thought faster, were more the people we wished we were, and had grown up farther together.>

So why was Eliezer so pessimistic? Why could he see no possible implementation of this utility function? Because he had not yet seen the wonders of language processing.

Moderate curation with interventions

| Model | GPT-3 (davinci) |

| Tokens | 794 |

| Gain (bits) | ρ (bits/token) | λselection (tokens/bit) | |

|---|---|---|---|

| Intervention | 96.97 | 0.122 | |

| Selection | 33.9 | 0.042 | 23.419 |

| Total optimization | 130.8 | 0.164 |

… But GPT-3 takes specifications in natural language, which is made of words, which point to nuanced human ideas, ideas like “explicitly”, “best interests”, “idealized”, and “utility function”. And GPT-3 can use its prior understanding of human thought and language to extrapolate volitions on this topic, to make a conjecture in its utility function about what CEV should be.

Language Models Are Necessary To Do Anything With General AI

It is so insanely hard to say anything specific about anything . For example, if a complexity theorist tried to say something firm about the provable complexity of a field of string theory research, the statement might involve hundreds of moving parts in the mathematical equations; words like bounded, balanced, polynomial growth; and careful use of the terms “state”, “time”, “initial conditions”, “simulation”, “ensemble”, “local minima”, “definition of rigidity”, and probably a bunch of others I don’t even know about. That level of complexity is the natural state of most things in the universe. And yet there is a really good chance that there is a natural level of approximation to a complex statement that is as simple as one of those things in its domain. That level is what Language Models let us access. Natural language basically fits words to our understanding of the salient features of the world, discarding astronomical quantities of noise, so that most bits of natural language are simple enough for humans to understand. I guess it’s like a really good approximation of the complexity of a field of many-body physics that lets us pick out something like we’ve always meant by “water”, or “it’s fine”, or “kiss”. Or, to put it in the terms I would have used before I learned about Language Models - natural language is an approximation of what a human would want to say about the world, and a way of finding interjections like “but” and “however” that remind people to pay attention to things like exceptions and qualifications.

Natural language is a whiteboard that lets us rattle off our ideas without worrying about what we’re saying, and a system of bookkeeping symbols that lets us make changes to the things we’re talking about and refer back to them later. And language models are the way that we can convey our ideas to AIs, the way that we can give them a whiteboard and bookkeeping symbols to let them rattle off their own ideas about a topic.

Lets Use Our New Whiteboard To Build A Science of General AI

And guess what? GPT-3 understands the language model for a bunch of different topics in the space of artificial general intelligence, AI safety theory, and FAI. GPT-3 can look at questions like “what is the ideal objective function for an AGI that wants to minimize existential risk” and make a coherent statement about the answer that is as good as the best humans can do. GPT-3 is an AI that can open a dialogue with us about AI safety theory, and will talk about the ideas inside the ideas inside the ideas of things like CEV.

So here’s my idea about how we might get into a good state of the world with this. First, post about this stuff on LessWrong and grab a bunch of people who are trying to go meta on the above topics, and have them add their own pet intuitions to GPT-3 on what they feel is glaringly missing from AGI safety discussions, and what they feel like they do understand that nobody else seems to be talking about. Then, train GPT-3 on their whiteboard musings + the output of the other GPT-3s, and GPT-3 is now in a position to systematically conjecture about a bunch of topics in FAI theory that no-one else can talk about.

Related

Methods of Prompt Programming#Creative composition (GPT-3’s need for curation to stay on track)

Leo Gao - Building AGI Using Language Models

Gwern - GPT-3 Creative Fiction#Quality

By superhuman here I mean » 99th percentile: for example, the ability to write convincingly in the style of James Joyce or expound coherently on niche, unprecedented topics like the implications of language models for outer alignment. This seems less a function of raw comprehension as language models' superhuman breadth of knowledge and corrigibility, compared to individual humans who tend to be constrained to narrow attractor states, even if they’re in principle capable of creative generation (e.g. dreams) and comprehending weird new ideas. ↩︎

I’ve found no evidence of an existing name for this quantity, except that “information gain” is often used synonymously with KL divergence, but there is precedent for referring to the information gain from a particular sample, or the “expectation of information gain”, so I’ve decided to call the log of magnification “gain”. ↩︎

The may seem unfair, because it means you can usually get a much higher quality sample by using the same number of bits on selection instead of on interventions. But this discrepancy is really a consequence of the fact that human curators aren’t usually capable of (or care about) exploiting the upper limit of optimization by arbitrary intervention, which is much less constraining than having to choose between verbatim completions provided by the language model. There are situations, however, where the fact that arbitrary intervention is a much greater a form of meddling than selection becomes clear, like if the token in question is the answer to a difficult math problem. ↩︎

An approximation – it’s possible to obtain verbatim replicas of the same completion using the normal method of sampling n completions from a language model, but very unlikely unless the completion length is very short and/or the multiverse has exceptionally low divergence (multiversal divergence, not KL). This measure becomes exactly correct if the interface hides verbatim duplicates, which is probably desirable anyway. ↩︎

Alternate completions generated by a language model will not turn out to be precisely equiprobable, but are equiprobable in expectation, so I will use this as an approximation. ↩︎

Surprisingly few bits, I’d bet. ↩︎

As you can see, although my interventions were minimal, they racked up a higher “cost” in bits than all cherrypicking put together. ↩︎